The suit uses electrical impulses to help muscles perform actions the person has never done before.

Imagine you approach an unfamiliar machine on a factory floor, and your hands automatically perform the correct sequence of actions, not because you studied the manual, but because the suit on your body is literally guiding every muscle. This is already a working prototype, built by scientists at the University of Chicago: the system combines electrical muscle stimulation with a multimodal AI. The work has already received the top award at the world’s largest conference on human-computer interaction, but it is still a long way from being an everyday product. So what can the suit do?

Neuromuscular AI Suit: Design and How It Works

The system was developed by graduate students Yun Ho and Romain Nith under the guidance of researcher Pedro Lopes at the Human Computer Integration Lab (HCintegration) at the University of Chicago. The suit consists of four key components:

- wearable electrodes for muscle stimulation,

- smart glasses with a built-in camera,

- a multimodal AI model capable of simultaneously processing images and text — the same class of technology as GPT-4.1,

- a layer for tracking body movements.

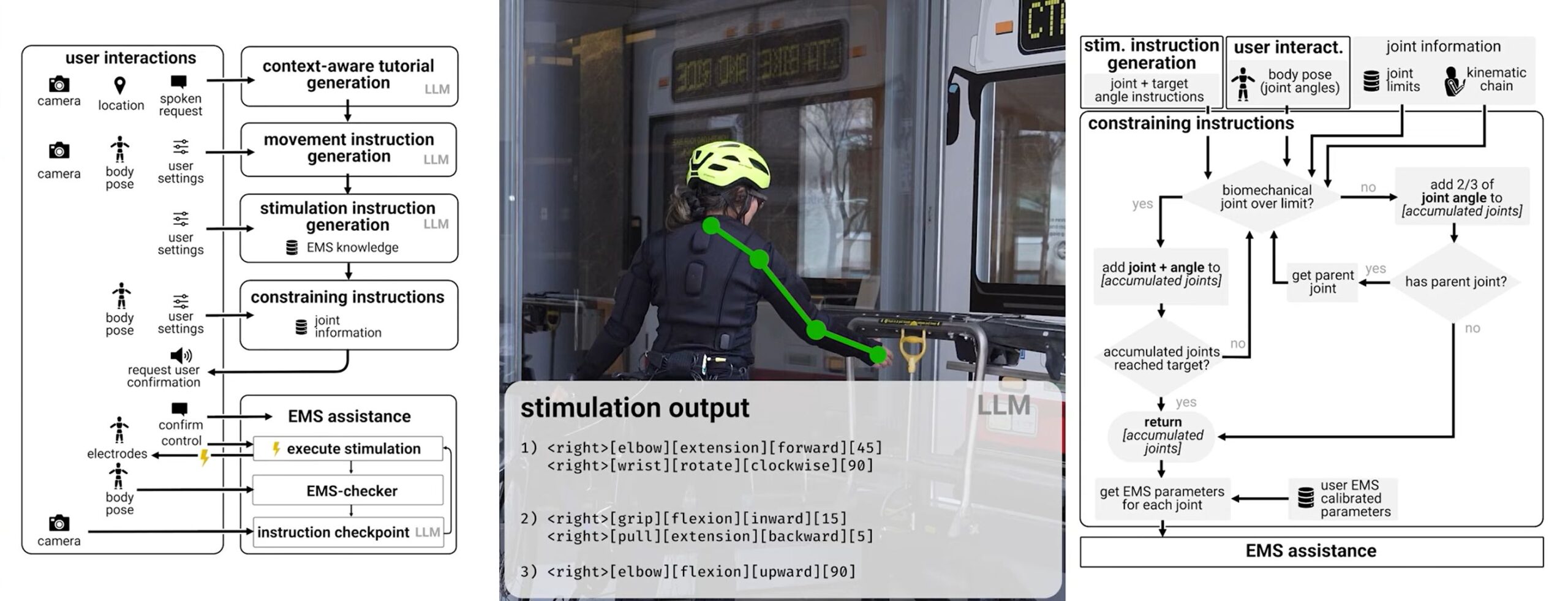

This entire system works in real time. The camera on the glasses sees what’s in front of you. Sensors track body position. The AI analyzes the context and generates instructions — which joint to move, in what direction, and in what sequence. The electrodes then transmit these commands directly to the muscles, making the body perform the necessary movements. And no pre-recorded programs are required.

Algorithm of the smart EMS suit.
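The loop described above (see, track, plan, stimulate) can be sketched in a few lines of Python. This is only an illustration under stated assumptions: `Observation`, `JointCommand`, `stub_planner`, and `run_step` are hypothetical names, the planner is a hard-coded stand-in for the multimodal model, and the direction labels are made up. None of this comes from the researchers’ code.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class Observation:
    scene: str                 # stand-in for what the glasses' camera sees
    posture: Dict[str, float]  # stand-in for tracked joint angles, in degrees
    request: str               # the user's spoken request


@dataclass
class JointCommand:
    joint: str      # which joint to move
    direction: str  # in what direction (illustrative labels only)


def stub_planner(obs: Observation) -> List[JointCommand]:
    """Hypothetical stand-in for the multimodal AI: returns an ordered list of moves."""
    if "window handle" in obs.scene and "open" in obs.request:
        return [
            JointCommand("fingers", "flex"),
            JointCommand("wrist", "rotate"),
            JointCommand("elbow", "extend"),
        ]
    return []  # unknown context: do nothing rather than guess


def run_step(
    obs: Observation,
    planner: Callable[[Observation], List[JointCommand]],
    stimulate: Callable[[JointCommand], None],
) -> List[JointCommand]:
    """One pass of the loop: plan from the current context, then send commands in order."""
    commands = planner(obs)
    for command in commands:
        stimulate(command)  # in a real suit this would drive the electrodes
    return commands


if __name__ == "__main__":
    sent: List[JointCommand] = []
    obs = Observation(
        scene="window handle, lever type",
        posture={"wrist": 0.0, "elbow": 90.0},
        request="help me open this",
    )
    run_step(obs, stub_planner, sent.append)
    print([f"{c.joint}:{c.direction}" for c in sent])
```

Passing the planner and the stimulation function in as arguments keeps the sketch testable: the same loop runs with a stub here and could, in principle, run with a real model and real hardware.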

AI-Powered Electrical Muscle Stimulation and How It Differs from Standard EMS

Electrical muscle stimulation (EMS) is not a new technology. It has been used for decades in rehabilitation, piano training, sign language instruction, and even fitness. The concept is simple: weak electrical impulses are delivered to specific muscles, causing them to contract. But all previous systems had one fundamental problem — they operated on rigid scripts.

Program such a system to shake an aerosol can before painting, and it will shake. Show it a cooking oil spray can that doesn’t need shaking, and it will still shake. For such a system, context simply did not exist.

The new system is fundamentally different in that it can reason about context. The camera sees the object, sensors read body posture, and the AI processes everything together, generating instructions adapted to the specific moment and situation. It’s like the difference between a GPS that gives the same route regardless of traffic, and one that reroutes on the fly.

Smart glasses capture what’s in front of the user, the motion-tracking suit reads their posture in real time, and the AI processes all this data to decide which joint to engage, in what direction, and in what order.
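The spray-can example makes the contrast easy to show in code. The sketch below is a toy under stated assumptions: the `needs_shaking` flag is a hypothetical stand-in for whatever the AI infers from the camera image, and the step names are invented for illustration.

```python
from typing import Dict, List


def scripted_ems(_can: Dict[str, bool]) -> List[str]:
    """Rigid script: always shakes, whatever the object is."""
    return ["shake", "press_nozzle"]


def context_aware_ems(can: Dict[str, bool]) -> List[str]:
    """Context-aware plan: shakes only if the observed object calls for it."""
    steps: List[str] = []
    if can.get("needs_shaking", False):
        steps.append("shake")
    steps.append("press_nozzle")
    return steps


# Hypothetical observations; a real system would derive these from the camera.
paint_can = {"needs_shaking": True}
cooking_spray = {"needs_shaking": False}

if __name__ == "__main__":
    for name, can in [("paint can", paint_can), ("cooking spray", cooking_spray)]:
        print(name, "| scripted:", scripted_ems(can), "| context-aware:", context_aware_ems(can))
```

The scripted function ignores its input entirely, which is exactly the limitation the older systems had; the context-aware one produces a different plan for each object.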

How the AI Suit Protects the Body from Dangerous Movements

Between the AI and the human body stands one more layer: an anatomical safety filter. Its job is to prevent the artificial intelligence from harming the user. If the model suddenly issues a command to rotate the wrist 180 degrees (which is physically impossible without injury), the system automatically redistributes that movement across multiple joints.

In laboratory tests, the suit with the safety filter made significantly fewer errors than the base AI model without body anatomy awareness. This is critically important: any device capable of moving your body must understand its limits.
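One simple way to picture such a filter is a greedy split of the requested rotation along a chain of joints, each capped at its own limit. The article does not say how the real filter works, so this is an assumed strategy, and the limits in `JOINT_LIMITS_DEG` are illustrative placeholders, not anatomical or clinical values.

```python
from typing import Dict, List

# Illustrative per-joint rotation caps in degrees (assumptions, not clinical data).
JOINT_LIMITS_DEG: Dict[str, float] = {"wrist": 80.0, "forearm": 90.0, "shoulder": 90.0}


def redistribute_rotation(
    requested_deg: float,
    chain: List[str],
    limits: Dict[str, float] = JOINT_LIMITS_DEG,
) -> Dict[str, float]:
    """Split a requested rotation across joints so that no joint exceeds its cap."""
    sign = 1.0 if requested_deg >= 0 else -1.0
    remaining = abs(float(requested_deg))
    plan: Dict[str, float] = {}
    for joint in chain:
        if remaining <= 0:
            break
        share = min(remaining, limits[joint])  # never ask a joint for more than its cap
        plan[joint] = sign * share
        remaining -= share
    if remaining > 0:
        # The whole chain cannot provide this much rotation: refuse rather than overdrive.
        raise ValueError("requested rotation exceeds what the joint chain can safely provide")
    return plan


if __name__ == "__main__":
    print(redistribute_rotation(180, ["wrist", "forearm", "shoulder"]))
```

With these placeholder limits, a 180-degree request is no longer sent to the wrist alone; the wrist takes its capped share and the rest spills over to the forearm and shoulder. Requests that the whole chain cannot satisfy are rejected outright.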

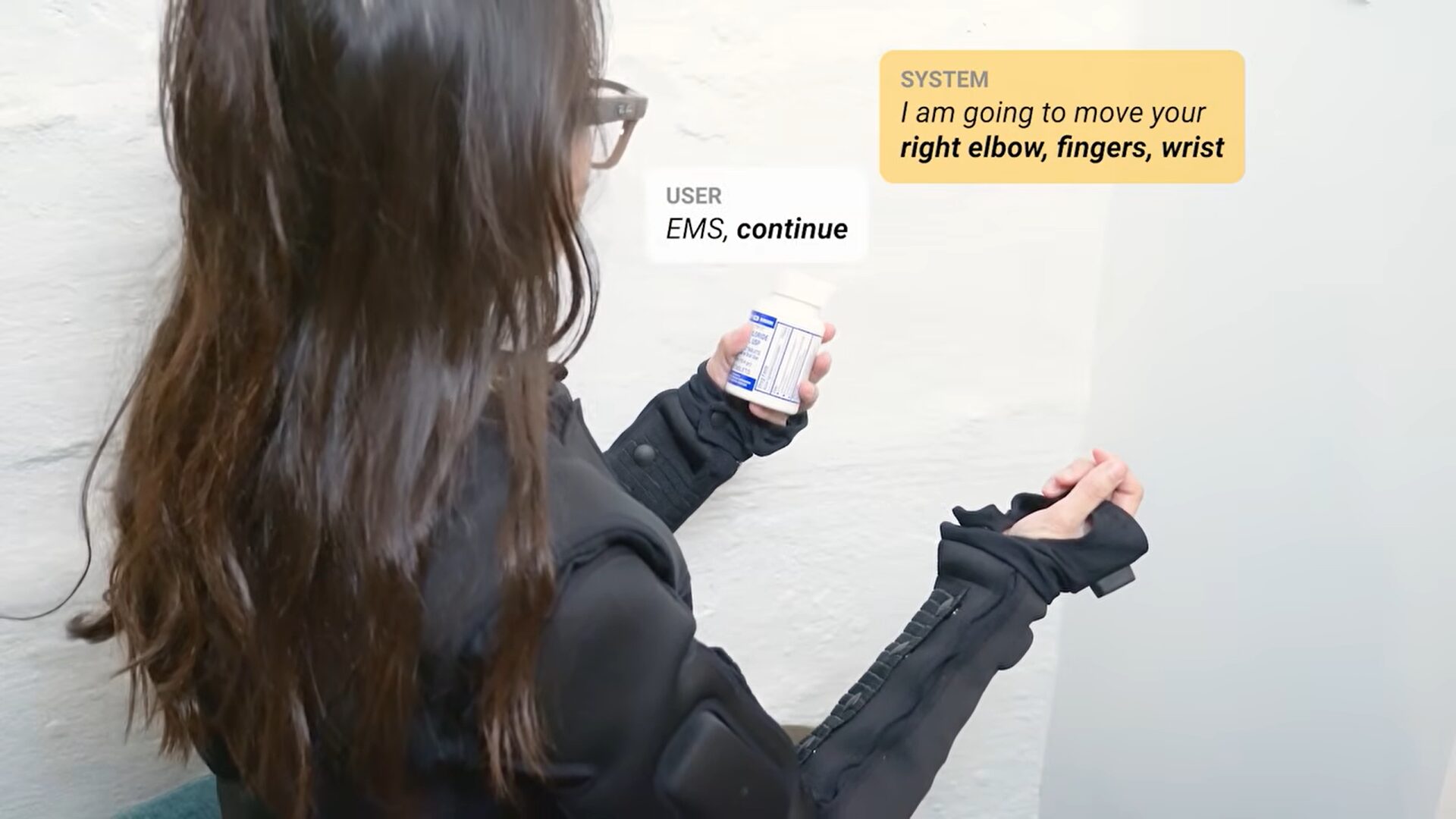

In practice, everything looks simpler than it sounds. The user approaches an unfamiliar window and says, “EMS, help me open this.” The system identifies the type of handle, then electrically guides the fingers, wrist, and elbow through the correct sequence of movements.

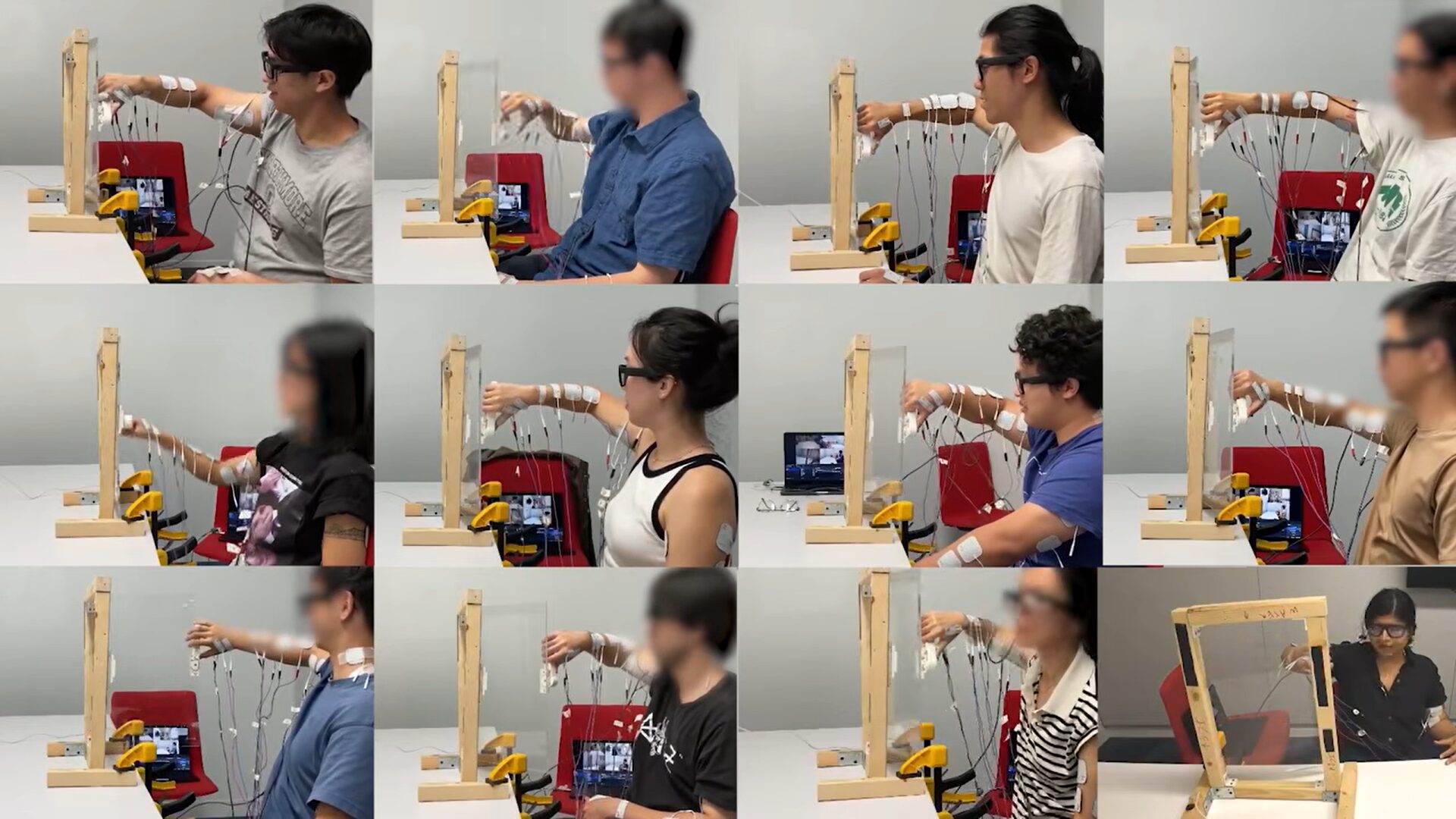

Participants use the system to open an unfamiliar window. The AI identifies the handle type and uses electrodes to guide the fingers, wrist, and elbow in the correct sequence.

Applications of the AI Suit in Medicine and Industry

The team highlights three near-term use scenarios:

- Rehabilitation and physical therapy. The suit can guide patients through safe movements at home, without the constant presence of a doctor.

- Industrial training. In manufacturing, the system can reduce training time and lower the risk of injury when working with unfamiliar equipment.

- Assistance for the visually impaired. Instead of audio descriptions, the suit offers direct physical guidance: real, controlled movement through an unfamiliar environment.

Interestingly, in user tests, when the system intentionally made mistakes, participants noticed them, corrected them with voice commands, and still completed the task. One participant noted that their own body intuition made errors immediately obvious. This is an encouraging sign: the person truly remains “in the loop” of control, rather than becoming a passive puppet.

Why the AI Suit Isn’t Ready for Everyday Use Yet

Despite the impressive demonstration, the researchers openly acknowledge that it is still a long way from a mass-market product. Here are the main limitations:

- Electrode calibration must be personalized for each body — there are no universal settings yet.

- The tingling from electrical stimulation can be uncomfortable.

- The system does not build true muscle memory — the deep, practiced skill that only comes with repetition.

A woman gives the command “open this.” The system evaluates the situation and responds; once she gives the command “continue,” the suit opens the jar.