Can artificial intelligence teach us to understand animals? Experts weigh in

Artificial intelligence already lets us give commands to Siri and Alexa, but what if we pointed this technology at other species? Several major scientific projects are using machine learning to decode animal communication. A full-fledged “conversation” with a cat remains science fiction for now, but the first results are impressive and raise serious questions about the nature of language itself. No wonder the topic is drawing so much attention: in a separate experiment, scientists have even managed to communicate with a whale.

Do Animals Have Language, and How Are Scientists Investigating It?

Before “translating” anything, we need to understand: what exactly are we translating? Humans communicate with words, gestures, and facial expressions. Animals also use complex signals — dogs wag their tails, bees dance, dolphins click and whistle, and elephants, as it turns out, can address each other by name. But can this be considered language?

Denise Herzing, research director of the Wild Dolphin Project, explains the situation this way: we don’t yet know whether animals have a true language, but AI is capable of detecting language-like structures in their communication — elements resembling grammar or vocabulary. If such patterns are found, it would be a strong argument that animals communicate in more complex ways than we thought.

This is where the main difficulty arises. Julia Fischer from the German Primate Center warns that AI is not a magic wand. An algorithm can find patterns in sounds, but without behavioral observations in a natural environment, those patterns are meaningless. It’s not enough to record thousands of hours of sounds: each recording has to be correlated with what the animals were doing at that moment. Otherwise, any attempt to talk with animals will remain nothing more than a beautiful metaphor.

How Artificial Intelligence and Machine Learning Decode Animal Sounds

Elodie Briefer, an animal behavior specialist at the University of Copenhagen, explains: animal vocalizations carry multiple types of information — from the identity of the individual to its emotional state, status, and even descriptions of external events. All of this, in theory, can be captured by AI.

Machine learning plays the key role here. It is a type of AI that learns patterns from data instead of following rules written out by hand: the algorithm processes recordings and finds regularities on its own. This is the same technology behind predictive text on your smartphone and voice assistants. The difference is that “language models” for animals are built not on words but on sound signals such as clicks, whistles, grunts, and ultrasound.

According to Briefer, the advantage of machine learning lies in scale. Where a human would spend years manually analyzing recordings, an algorithm can process thousands of hours and find patterns that a researcher might miss.

Earth Species Project: Decoding Animal Language

One of the key organizations in this field is the nonprofit Earth Species Project, dedicated to decoding animal communication using AI. Their approach is based on the idea that language can be represented as a geometric shape — something like a galaxy where each “word” is a star, and the distances between stars encode semantic relationships. If the shapes of two languages match, they can be “overlaid” onto each other and thus translated.
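The “overlaying” idea can be illustrated with a toy sketch. It is not the Earth Species Project’s method: it assumes two flat, two-dimensional “galaxies” whose stars are already paired up, and simply finds the rotation that best lays one over the other. The point coordinates and the function names `best_rotation` and `rotate` are hypothetical. Real unsupervised translation is much harder, because nobody knows in advance which star corresponds to which.

```python
import math

def best_rotation(source, target):
    """Angle (radians) that rotates 2-D source points onto their paired
    target points with the least squared error."""
    cross = sum(sx * ty - sy * tx for (sx, sy), (tx, ty) in zip(source, target))
    dot = sum(sx * tx + sy * ty for (sx, sy), (tx, ty) in zip(source, target))
    return math.atan2(cross, dot)

def rotate(points, angle):
    """Rotate every (x, y) point counterclockwise by the given angle."""
    c, s = math.cos(angle), math.sin(angle)
    return [(c * x - s * y, s * x + c * y) for x, y in points]

# Hypothetical toy "galaxies": language B is language A turned by 40 degrees.
lang_a = [(1.0, 0.0), (0.0, 2.0), (-1.5, 0.5), (0.5, -1.0)]
lang_b = rotate(lang_a, math.radians(40))

angle = best_rotation(lang_a, lang_b)   # recovers the 40-degree turn
aligned = rotate(lang_a, angle)         # language A laid over language B
```

Once the shapes are aligned, a “word” in one language can be matched to its nearest neighbor in the other, which is the intuition behind translating by geometry.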

In late 2021, the Earth Species Project published a paper in the journal Scientific Reports describing an algorithm that solves the so-called “cocktail party problem.” Imagine a noisy party: multiple voices sound simultaneously, and understanding who exactly is speaking is nearly impossible. The same problem arises when recording sounds in a group of animals. The Earth Species Project algorithm was able to determine which specific dolphin, macaque, or bat was “speaking” in a group.

[Image: Researchers analyze spectrograms of animal sounds]

Today the project is building NatureLM-audio, the world’s first large audio-language model designed specifically for analyzing animal vocalizations. It is trained on massive datasets ranging from human speech and music to environmental sounds. Initial results show that patterns extracted from human speech do indeed help the model better understand the sounds of other species.

Whale and Dolphin Language Studied by Project CETI and DolphinGemma

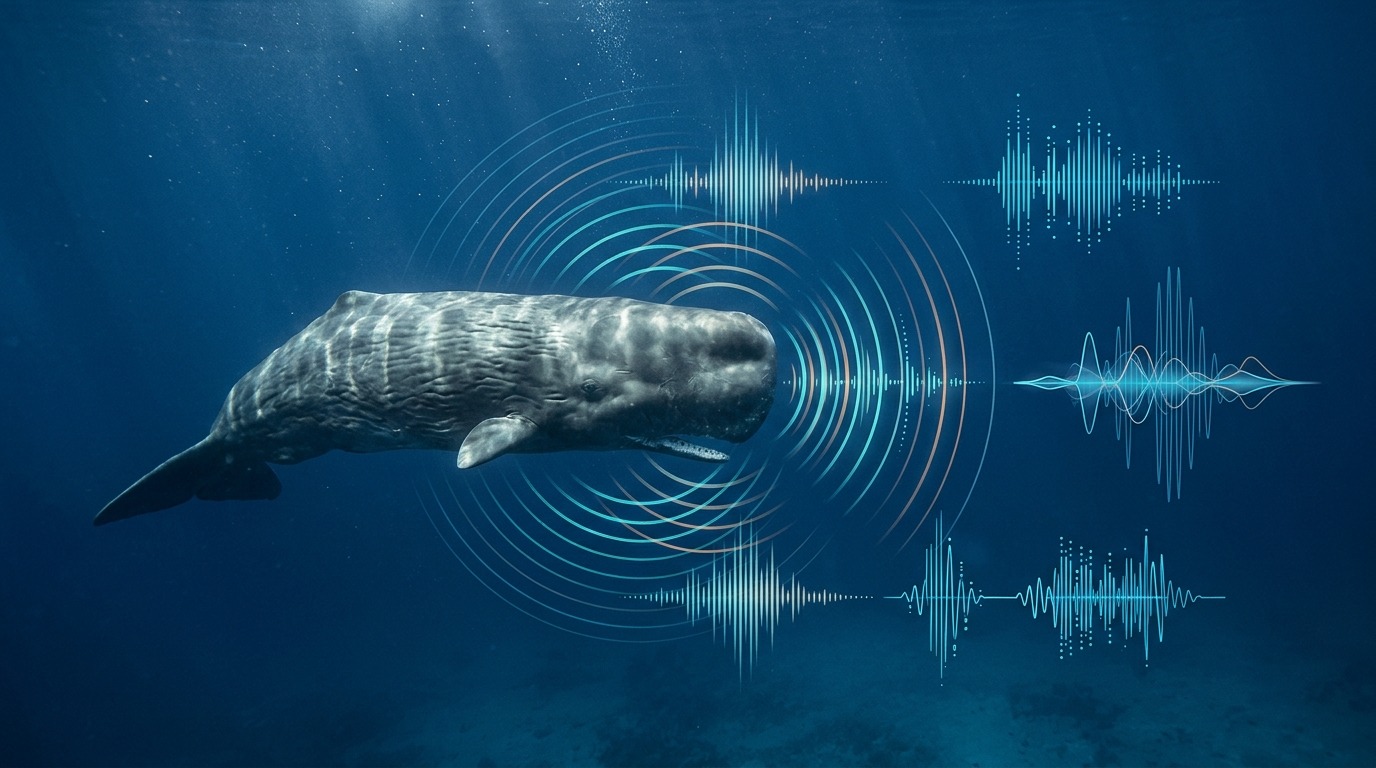

Another major project — Project CETI (Cetacean Translation Initiative) — focuses on sperm whales. These whales communicate through rhythmic series of clicks called “codas.” Previously, scientists considered these sounds to be something like Morse code. Research using generative adversarial networks (GANs) revealed something astonishing: the acoustic properties of sperm whale clicks resemble vowel sounds in human speech — with differences in duration, frequency, and trajectory, just like in humans.

CETI researchers have already identified 156 different codas and their basic components — effectively a “phonetic alphabet of sperm whales.” The project brings together about 50 scientists from eight institutions: linguists, roboticists, cryptographers, and marine biologists.

On the dolphin side, there is news as well. The Wild Dolphin Project, founded by Denise Herzing, achieved a notable result in 2013: researchers taught a group of dolphins to associate a specific whistle with sargassum seaweed. A machine learning algorithm then managed to recognize this whistle in a natural setting. And most recently, Google, together with the Wild Dolphin Project and the Georgia Institute of Technology, introduced DolphinGemma — an AI model based on the Gemma architecture, trained on years of sound data from Atlantic spotted dolphins.

[Image: A sperm whale in the ocean. Scientists are deciphering the structure of its click-codas]

DolphinGemma works on a “sound in — sound out” principle: the model analyzes sequences of dolphin sounds and predicts what sound will come next — much like a language model predicts the next word in a sentence. At the same time, the model is compact enough (about 400 million parameters) to run directly on a Google Pixel smartphone — right in the field, underwater.
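The “predict what comes next” principle can be shown with a far simpler stand-in than DolphinGemma itself. The sketch below is not the model’s actual architecture: it assumes the sounds have already been labeled as discrete types, and it only counts which sound most often follows which. The recordings and the names `train_bigram` and `predict_next` are made up for illustration.

```python
from collections import Counter, defaultdict

def train_bigram(sequences):
    """Count how often each sound type is followed by each other sound type."""
    counts = defaultdict(Counter)
    for seq in sequences:
        for current, following in zip(seq, seq[1:]):
            counts[current][following] += 1
    return counts

def predict_next(counts, current):
    """Return the sound that most often followed `current`, or None if unseen."""
    if current not in counts:
        return None
    return counts[current].most_common(1)[0][0]

# Hypothetical labeled recordings, one list of sound types per recording.
recordings = [
    ["whistle", "click", "click", "buzz"],
    ["whistle", "click", "buzz"],
    ["click", "buzz", "whistle"],
]
model = train_bigram(recordings)
```

Here `predict_next(model, "whistle")` returns `"click"`, because a click followed a whistle in both recordings where a whistle was followed by anything. A neural model does the same job with far more context and works on the audio itself instead of hand-assigned labels.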

How AI Recognizes Animal Emotions by Sound

It’s not just cetaceans that are attracting researchers’ attention. Elodie Briefer and her colleagues trained an AI system to recognize positive and negative emotions in the grunts, squeals, and oinks of pigs. This is not an abstract exercise — understanding the emotions of farm animals can directly improve their living conditions.

The situation with rodents is even more interesting. Mice and rats communicate in the ultrasonic range — their “conversations” are simply imperceptible to the human ear. The DeepSqueak program, developed by scientists at the University of Washington, converts ultrasonic signals into spectrograms (visual images of sound) and analyzes them using neural networks. It turned out that rodents have about 20 types of vocalizations, and they use different “songs” depending on the situation — for example, male mice “sing” differently in the presence of another male versus near a female.
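To make the idea of a spectrogram concrete, here is a minimal sketch of the conversion step only. It is not DeepSqueak’s code. It assumes a synthetic 50 kHz tone sampled at 250 kHz (both values are illustrative) and uses a deliberately naive Fourier transform so that nothing beyond the standard library is needed. The function name `spectrogram` and its parameters are hypothetical.

```python
import cmath
import math

def spectrogram(signal, frame_size, hop):
    """Magnitude spectrogram: one list of frequency-bin magnitudes per time frame."""
    frames = []
    for start in range(0, len(signal) - frame_size + 1, hop):
        frame = signal[start:start + frame_size]
        bins = []
        # Naive discrete Fourier transform, keeping non-negative frequencies only.
        for k in range(frame_size // 2 + 1):
            total = sum(x * cmath.exp(-2j * math.pi * k * n / frame_size)
                        for n, x in enumerate(frame))
            bins.append(abs(total))
        frames.append(bins)
    return frames

sample_rate = 250_000   # Hz, assumed; ultrasound needs a high sampling rate
tone_hz = 50_000        # Hz, hypothetical ultrasonic call
frame_size = 50         # samples per frame, so bins are 5 kHz apart

signal = [math.sin(2 * math.pi * tone_hz * n / sample_rate) for n in range(200)]
spec = spectrogram(signal, frame_size=frame_size, hop=25)

# The strongest bin in the first frame tells us the dominant frequency.
peak_bin = max(range(len(spec[0])), key=lambda k: spec[0][k])
peak_hz = peak_bin * sample_rate / frame_size
```

Each row of `spec` is one slice of time and each column one frequency band, which is the “image of sound” that a neural network can then classify. Practical tools would use a fast Fourier transform and a window function instead of this slow, bare-bones version.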

Why Scientists Use AI to Understand Animal Language

Beyond the obvious (finally finding out what your cat really thinks), understanding animal communication has quite practical implications. For domestic and farm animals, it’s a matter of welfare.

“For species that live alongside us, understanding their state is critically important because their well-being depends on us,” says Elodie Briefer, animal behavior specialist.

But the scale of potential changes is much broader. If it turns out that animals truly have elements of language, this could force a reconsideration of our treatment of them — in sports, entertainment, scientific experiments, and agriculture. And it’s not just about communication: growing evidence shows that many species are smarter than we thought. Researchers from Project CETI are already collaborating with lawyers from New York University, studying how discoveries in sperm whale communication could affect the legal status of animals.

There is also fundamental scientific interest. Studying animal communication can tell us about the evolution of language itself.

Denise Herzing adds an even more ambitious perspective: “The tools we are developing for species on Earth may prove useful for distant worlds — if we ever encounter other forms of life.”