A user trying to figure out their symptoms using an AI chatbot

AI chatbots already outscore most doctors on medical exams, and expectations for such systems are high: services are appearing that promise to recognize diseases from symptoms faster than a doctor can. But when ordinary people use them to make sense of their own symptoms, the result is no better than with no AI at all. A large-scale study published in Nature Medicine showed for the first time exactly where the chain breaks between a model’s knowledge and real benefit for the patient. The reason was unexpected: the problem lies not in what the AI knows, but in how people talk to it.

How AI Makes Diagnoses: Results of a New Study

The study was conducted by scientists from the University of Oxford together with MLCommons and other institutions. Nearly 1,300 participants received descriptions of ten typical medical situations and were randomly assigned to groups: some used a chatbot (GPT-4o, Llama 3, or Command R+), while the control group used whatever information sources they would normally turn to.

After communicating with the bot, participants were asked two things: what disease could explain the symptoms, and where to seek help. When the same chatbots were tested “on their own,” without a human, they identified the correct disease in 94.9% of cases. But when real people worked with the bots, accuracy fell below 34.5%. Moreover, participants in the AI group performed no better than the control group, which didn’t use chatbots at all.

In other words, a chatbot that answers exam questions brilliantly turned out to be useless with an ordinary person at the keyboard. That makes the picture all the more confusing, because isolated cases in which ChatGPT reached a diagnosis that doctors had long failed to find have only strengthened people’s faith in “medicine through chat.”

Why AI Passes Medical Exams but Doesn’t Help Patients

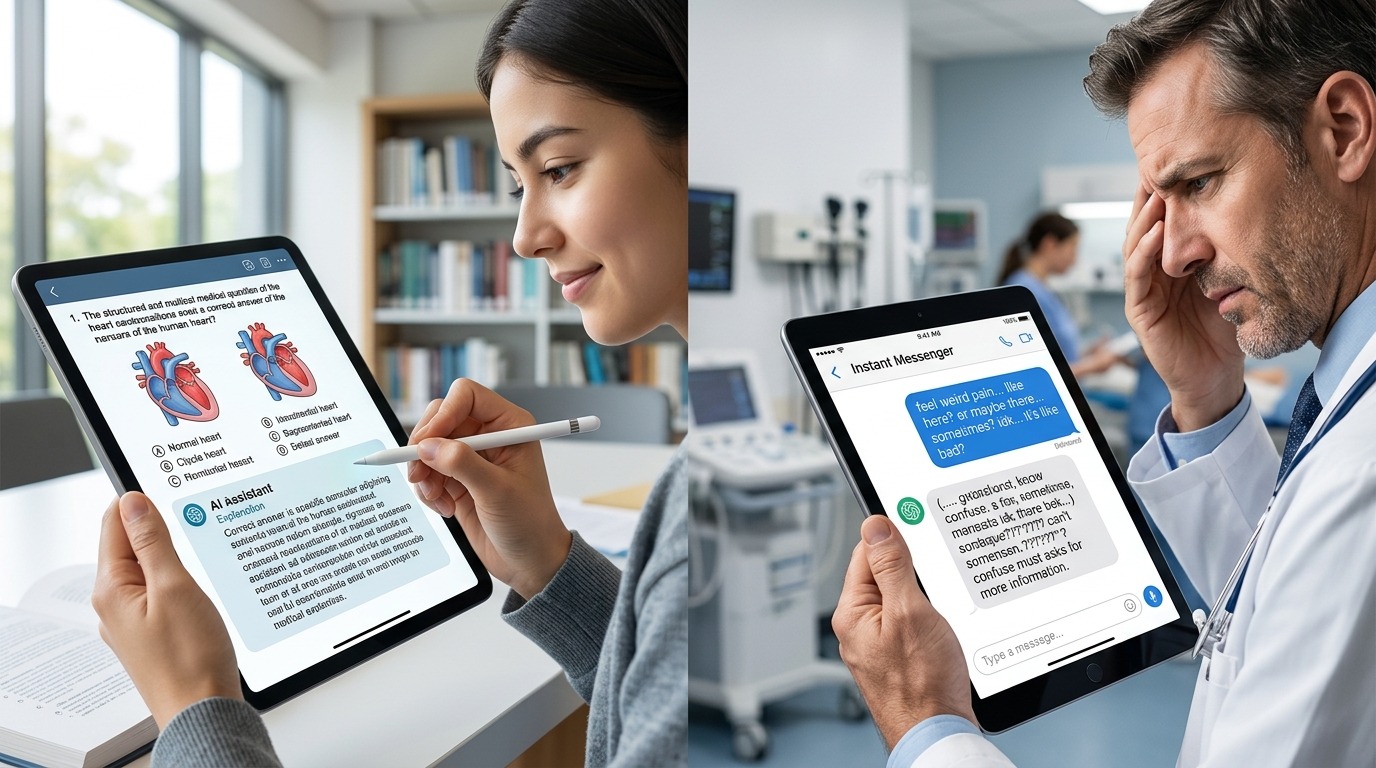

Here’s the paradox: language models already earn near-perfect marks on medical licensing exams. A meta-analysis of 120 trials showed that GPT-o1 achieves 95.4% accuracy on medical licensing questions, DeepSeek-R1 reaches 92%, and GPT-4o 89.4%. Simply put, these models know medicine better than many medical school graduates.

But an exam is not a doctor’s appointment. On an exam, the model receives a clearly formulated question with all the necessary data. Real life is different. When researchers studied the conversation transcripts, they found that the bot often mentioned the correct diagnosis somewhere in the conversation, but users didn’t notice it or didn’t remember it. In other cases, people provided incomplete information, and the bot misinterpreted key details. The problem lay not in medical knowledge but in communication between human and machine.

Imagine you have an encyclopedia that contains the correct answer, but it’s written in such a way that you flip past the right page. The knowledge is there, but it doesn’t reach the reader. The risk is even higher because the bot often simply agrees with the user instead of pushing back, clarifying details, and leading the conversation the way a doctor does during an appointment.

Comparison: AI answers accurately on an exam, but loses context in real dialogue

Why AI Misunderstands User Symptoms

Unlike in simulated tests, real people didn’t give the bots all the relevant information. On top of that, they had difficulty interpreting the options the chatbot suggested, misunderstood its advice, or simply ignored it.

Problems in human-AI communication fall into three types:

- Incomplete description of symptoms. Patients don’t know which details are important and leave out key facts, whereas a doctor knows which clarifying questions to ask.

- Loss of needed information. The bot might have named the correct diagnosis in the middle of the conversation, but the user didn’t notice it among the stream of text.

- Incorrect interpretation. People interpreted the bot’s recommendations in their own way, sometimes as the direct opposite of what it meant.

Some experts point out that bots should ask clarifying questions themselves, as doctors do. “Is it really the user’s responsibility to know which symptoms to highlight, or is it partly the model’s job to know what to ask?” the researchers ask.

Why a Doctor Understands a Patient Better Than AI

There is a fundamental difference between how a doctor communicates with a patient and how a chatbot does it. Medicine is often called more of an art than a science. A consultation isn’t just about determining the correct diagnosis: it includes interpreting the patient’s history, working with uncertainty, and shared decision-making.

The Calgary-Cambridge model, a method of structuring medical consultations, has existed for decades for exactly this purpose. It covers everything from opening the appointment and gathering information to explaining results and jointly planning treatment. The approach involves building trust with the patient, gathering information through precise questions, understanding the patient’s concerns and expectations, clearly explaining findings, and agreeing on a plan of action. All of this relies on human connection, adaptive communication, clarifications, gentle guiding questions, context-aware judgments, and trust. These qualities cannot be reduced to pattern recognition.

In other words, doctors are taught not just to know the answer, but to “extract” it from a patient who doesn’t always understand what’s happening to them. Chatbots still can’t do this.

A doctor reviewing a patient summary prepared by an AI system

Where AI Is Already Genuinely Useful in Medicine Today

Does all this mean that AI is useless in healthcare? No. But according to the study, none of the tested chatbots are “ready for deployment in direct patient care.”

The study’s authors suggest thinking of chatbots not as doctors but as assistants: they excel at organizing information, creating summaries, and structuring complex documents. It’s in such tasks that AI is already bringing real value in medicine, for example in composing clinical notes, summarizing medical histories, or preparing referrals. In narrow tasks where it works with structured medical data rather than free dialogue, AI can already predict cancer in advance.

One in six American adults already turns to AI chatbots for medical information at least once a month, and this number continues to grow. Meanwhile, the major developers OpenAI and Anthropic have already released specialized medical versions of their chatbots, and experts believe these may show different results in similar studies. For now, though, that is only a hope.

The main lesson of this study is the gap between benchmarks and reality. Passing an exam and helping a real person are different tasks. Just as passing a theoretical driving test doesn’t make someone a good driver, brilliant results on medical tests don’t turn a language model into a reliable diagnostician. Reliable diagnosis also requires empathy, adaptability, and the ability to work with what the patient cannot or does not want to share. For now, these qualities remain human territory.