About 8,000 neurons were recorded simultaneously from each mouse. When scientists say “I see right through you,” this is exactly what they mean.

If you close your eyes and recall a friend’s face, something like a blurry photograph flashes in your mind. Now imagine that someone else could see that “photograph.” It sounds like science fiction, but British scientists have done exactly that — though so far only with mice. They reconstructed 10-second video clips based solely on neuronal activity in the rodents’ brains.

A New Way to Read Minds

In recent years, researchers around the world have been trying to “reverse” the brain’s work: take neural signals and convert them back into digital pixels. Previously, fMRI was used for this — a method that tracks blood flow to different areas of the brain. The problem is that fMRI sees the brain in “broad strokes”: it captures the activity of large groups of neurons at once, losing a great deal of detail in the process.

A team of British scientists took a different approach. Instead of fMRI, they used single-cell recordings from the mouse visual cortex. This is a fundamentally different level of precision, allowing observation of each neuron individually. As lead author Dr. Joel Bauer explained, existing methods generalize poorly to new situations that were not specifically tested. Therefore, the team wanted to develop an approach that would capture what is actually represented in the brain and compare it with reality.

How Scientists Read a Mouse’s Thoughts

At the core of the method lies the so-called Dynamic Neural Encoding Model (DNEM). Simply put, it is a predictive model that learns to anticipate how each individual neuron will respond to a specific video frame.

But there’s a nuance: the model accounts not only for the video itself but also for the mouse’s physical behavior — body movements and pupil dilation. The reason is that the animal’s internal state directly affects how it perceives an image. If the mouse is actively moving or its pupils are dilated, neurons respond differently than when it is calm.
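To make the idea concrete, here is a minimal Python sketch of an encoding model whose output depends on both the image and the animal's state. It is not the DNEM from the study: the softplus filter, the multiplicative gain, and every name in it (`encode_responses`, `visual_weights`, `behavior_weights`) are assumptions chosen purely for illustration.

```python
import numpy as np

def encode_responses(frame, running_speed, pupil_size,
                     visual_weights, behavior_weights):
    """Hypothetical toy encoder: visual drive scaled by a behavior-dependent gain.

    visual_weights has shape (n_neurons, n_pixels);
    behavior_weights has shape (n_neurons, 2).
    """
    # Visual part: one linear filter per neuron, passed through a softplus
    # so that predicted activity is never negative.
    visual_drive = np.logaddexp(0.0, visual_weights @ frame.ravel())
    # Behavioral part (assumed form): running and pupil size set a
    # per-neuron multiplicative gain, equal to 1 when both are zero.
    behavior = np.array([running_speed, pupil_size])
    gain = np.exp(behavior_weights @ behavior)
    return gain * visual_drive

# Same frame, two internal states: the predicted responses differ.
frame = np.ones((2, 2))
visual_weights = np.full((3, 4), 0.5)
behavior_weights = np.full((3, 2), 0.3)
calm = encode_responses(frame, 0.0, 0.0, visual_weights, behavior_weights)
active = encode_responses(frame, 1.0, 1.0, visual_weights, behavior_weights)
print(calm, active)
```

In this toy version the same frame produces stronger predicted responses when the animal is running with dilated pupils, which is the kind of state dependence the paragraph above describes.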

To track which specific cells were “activated,” the researchers recorded local spikes in calcium levels — a reliable marker of neural activity. Then, the actual brain activity was compared with what the model would have predicted for a mouse looking at a blank screen. Starting from a “blank canvas,” the algorithm gradually updated pixels based on deviations from the predicted activity until the result began to match what the mouse was actually seeing.
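The “blank canvas” procedure can be sketched in a few lines of Python. This is a simplified illustration under stated assumptions, not the study's implementation: the encoder here is a hypothetical single-layer softplus model with random filters, the data are simulated, and the names `predict_responses` and `reconstruct` are invented for the example. The real model works on video and also takes behavior into account.

```python
import numpy as np

def predict_responses(weights, bias, pixels):
    """Hypothetical toy encoder: softplus of one linear filter per neuron."""
    return np.logaddexp(0.0, weights @ pixels + bias)

def reconstruct(weights, bias, recorded, steps=3000, learning_rate=0.02):
    """Recover pixels by gradient descent on the squared prediction error."""
    pixels = np.zeros(weights.shape[1])            # blank canvas (0 = mid-gray)
    for _ in range(steps):
        drive = weights @ pixels + bias
        # Deviation between what the encoder predicts and what was recorded.
        deviation = np.logaddexp(0.0, drive) - recorded
        slope = 1.0 / (1.0 + np.exp(-drive))       # derivative of softplus
        # Nudge every pixel in the direction that shrinks the deviation.
        pixels -= learning_rate * (weights.T @ (deviation * slope))
    return pixels

# Simulated data only: random filters and a random 16-pixel "frame".
rng = np.random.default_rng(0)
weights = rng.normal(size=(200, 16)) / 4.0
bias = np.zeros(200)
true_frame = rng.uniform(-1.0, 1.0, size=16)
recorded = predict_responses(weights, bias, true_frame)

estimate = reconstruct(weights, bias, recorded)
print(np.corrcoef(estimate, true_frame)[0, 1])
```

The key design point is that the encoder's parameters stay fixed during reconstruction. Only the pixels change, so the image is gradually pulled toward whatever input best explains the recorded activity.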

Which Thoughts Did the Scientists Read

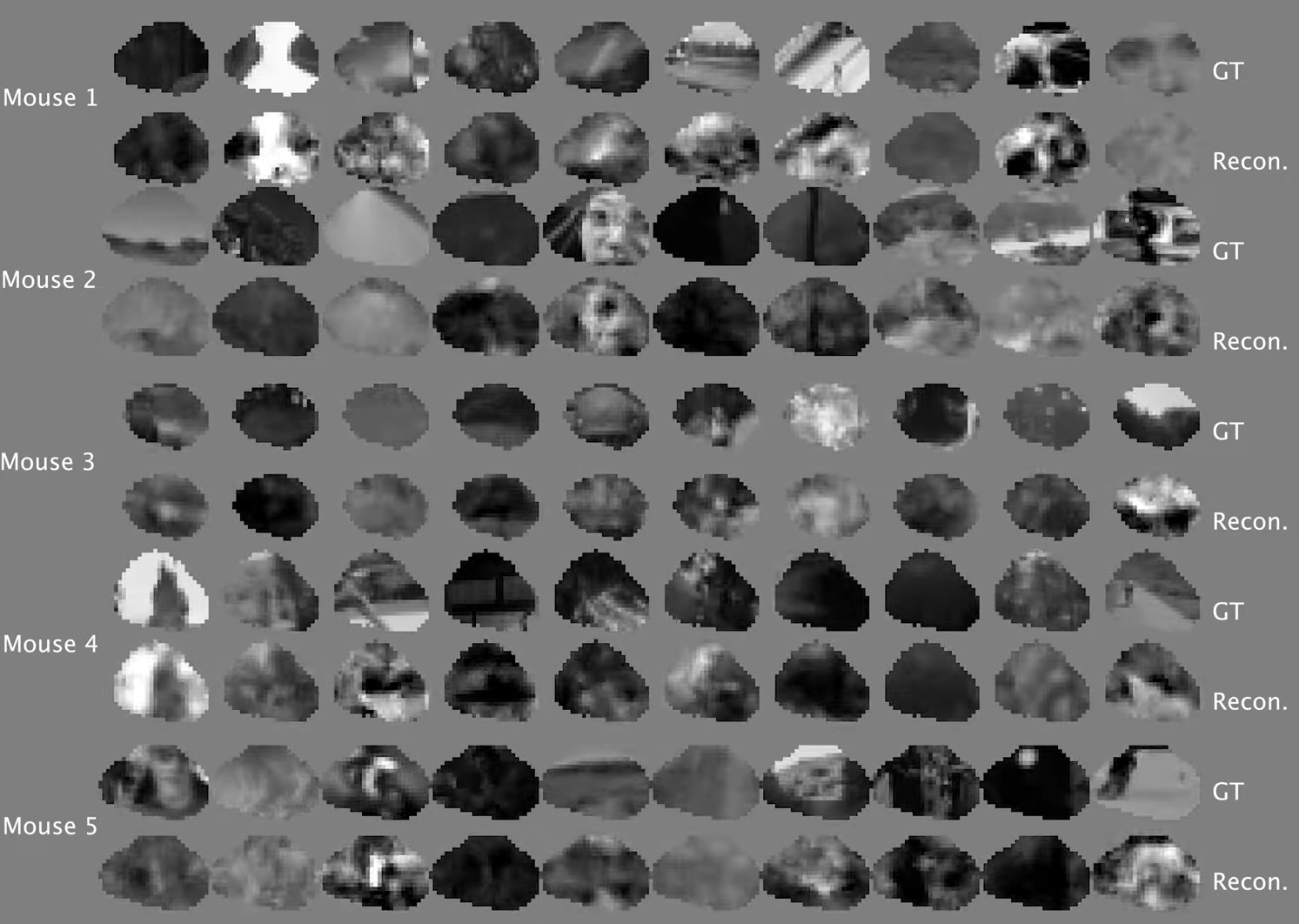

After the model was trained, the most exciting stage began. Scientists showed the mice an entirely new video that was not used during training and attempted to reconstruct it based solely on neural signals. And they succeeded.

Dr. Bauer noted that reconstruction quality directly depends on the volume of data. The more individual neurons that could be tracked, the more accurate the resulting video became. In the experiment, activity was recorded from approximately 8,000 neurons in each mouse’s visual cortex.

Accuracy was verified using pixel correlation, a statistical measure that compares the original video with the AI-generated version frame by frame. For comparison, previous attempts to reconstruct static images from the mouse visual cortex reached a correlation of 0.24. The new method achieved a correlation of 0.57, more than twice as high, and this time for full video rather than still images.
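For readers who want to see what such a score looks like in practice, below is a minimal Python sketch of a frame-by-frame pixel correlation. It assumes the metric is the Pearson correlation between the pixels of each original frame and its reconstruction, averaged over frames. The exact averaging used in the study may differ, and the function name `pixel_correlation` is made up for this example.

```python
import numpy as np

def pixel_correlation(original, reconstructed):
    """Mean per-frame Pearson correlation.

    Both inputs are arrays of shape (frames, height, width).
    Frames with no pixel variation are not handled (division by zero).
    """
    a = original.reshape(len(original), -1).astype(float)
    b = reconstructed.reshape(len(reconstructed), -1).astype(float)
    # Center each frame so that overall brightness does not affect the score.
    a -= a.mean(axis=1, keepdims=True)
    b -= b.mean(axis=1, keepdims=True)
    norms = np.linalg.norm(a, axis=1) * np.linalg.norm(b, axis=1)
    per_frame = (a * b).sum(axis=1) / norms
    return float(per_frame.mean())

# Simulated example: a random clip and a noisy copy of it.
rng = np.random.default_rng(1)
clip = rng.uniform(size=(5, 8, 8))
noisy = clip + rng.normal(scale=0.3, size=clip.shape)
print(pixel_correlation(clip, noisy))
```

A score of 1 means the reconstruction matches the original up to brightness and contrast, 0 means no linear relationship, and negative values mean the reconstruction is closer to a photographic negative of the original.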

Mouse thoughts that scientists managed to decode. Image source: YouTube channel Mouse EyeSpy

How the Brain Represents the Surrounding World

Perhaps the most surprising discovery lies not in the clarity of the video but in its errors. It turns out that a mouse’s brain (just like ours) does not work like a perfect camera. It doesn’t record reality “as is” but actively interprets it.

As Dr. Bauer explained, we don’t have a perfect representation of the world in our heads. The visual pipeline distorts and warps our perception, modifying information. But this deviation between reality and brain representations is not an error — it’s a useful function. The brain amplifies some signals and ignores others to help the animal survive.

In other words, the brain is not a passive recorder. It’s more like an editor that decides what’s important and what can be discarded. And this “editing” of reality is probably no accident: it most likely emerged through evolution because it helps the animal survive.

Why Mind Reading Matters

Currently, the team is focused on improving the resolution of reconstructions. Scientists want to achieve a wider field of view and a sharper picture — to literally see the world as the brain “conceives” it, with all its distortions and fluctuations.

But the practical prospects extend far beyond the laboratory. This technology could help us understand how different animal species perceive the surrounding world in their own unique ways. It could also shed light on visual impairments and neurological diseases in which the brain’s “editing” malfunctions.

The ability to literally “peek” through someone else’s eyes sounds fascinating, but what matters more is this: for the first time, scientists have obtained a tool that allows them to understand not just what the brain sees, but exactly how it distorts reality. And that is arguably far more interesting than any perfect copy.