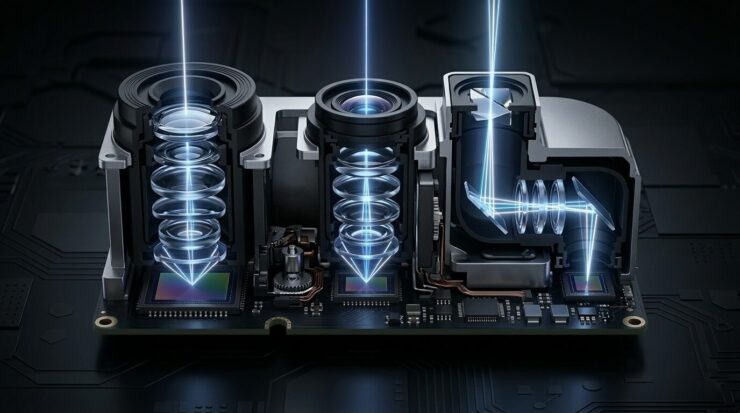

You’ve probably wondered why there’s such a large square block on the back of the iPhone with three “eyes.” Many people think these are just three different zoom levels, but the reality is much more interesting. Each of the three modules is a full-fledged camera in its own right, with its own lens (a stack of several lens elements) and its own sensor, and all three work as a unified system that decides which “eye” to use at any given moment.

How the 3 cameras in the iPhone actually work and what happens to light inside them

Why the iPhone Needs Three Cameras

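The answer lies in physics. A single camera with one fixed lens has a single, fixed field of view, so it cannot capture both a wide landscape and a distant subject at high quality: any framing tighter than its native one has to be cropped out of the image, which throws pixels away. On top of that, the more light a camera collects, the better the shot turns out, and a phone body less than a centimeter thick leaves little room for a bigger lens or a moving zoom mechanism. One tiny lens reached that limit long ago.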

Three cameras and a mysterious black dot nearby — that’s the LiDAR scanner, which builds a depth map using laser pulses.

In the iPhone 16 Pro, for example, there are three rear cameras: a 48 MP main wide-angle camera with an f/1.78 aperture, a 48 MP ultra-wide with an f/2.2 aperture, and a 12 MP periscope-style telephoto with 5x optical zoom and an f/2.8 aperture. Each one covers its own shooting scenario: the main camera handles everyday shots, the ultra-wide handles landscapes and macro photography, and the telephoto brings distant subjects closer without the loss of detail that cropping would cause.

But the real trick isn’t the number of cameras; it’s how the three work together. Behind each lens sits its own image sensor, and together they form a unified system that decides when to engage which module.

What Do Lenses Do in a Smartphone

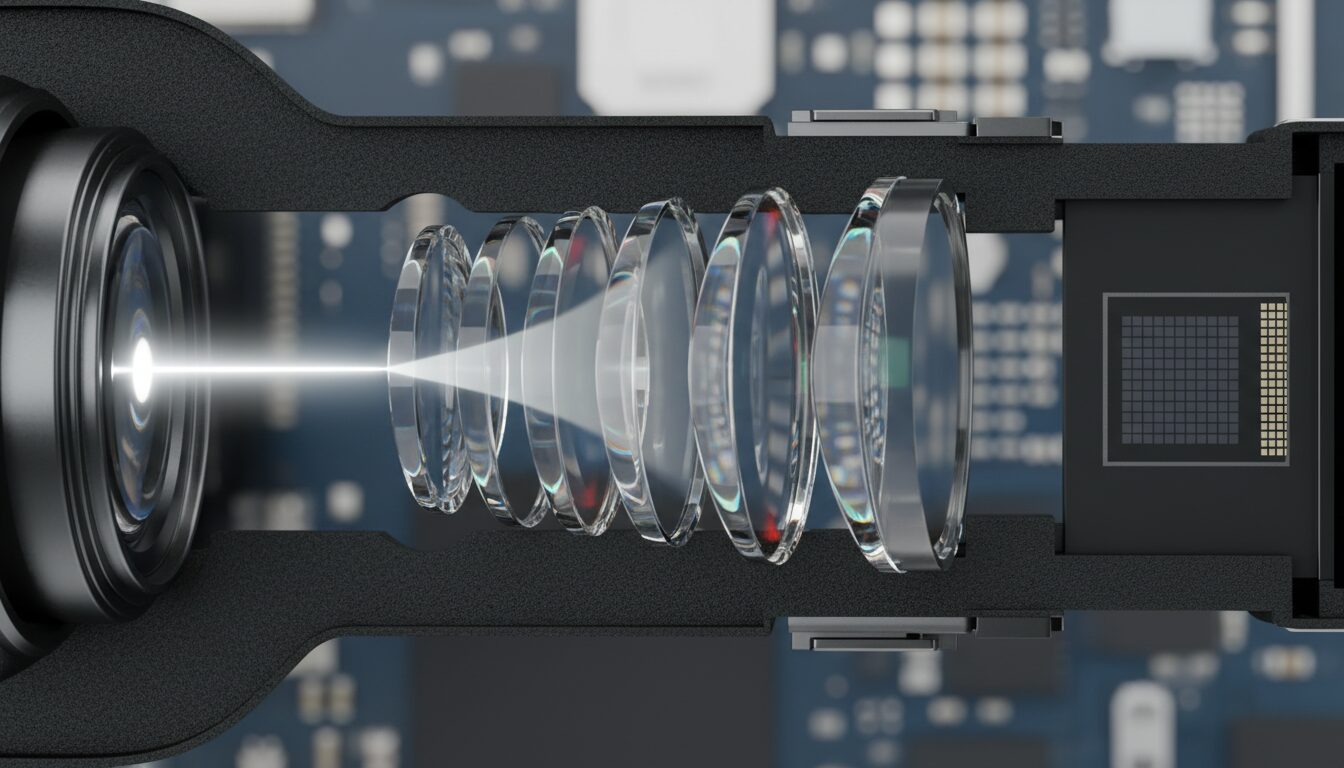

Any photograph is about working with light. When you press the shutter button, light passes through the camera lens and hits the sensor, forming an image. But between “pressed” and “got a photo,” there’s an amazing journey of photons through several layers of glass and plastic.

Each camera lens in the iPhone is actually a stack of several individual lenses called elements. As light passes through each element, it refracts, meaning it changes direction. All optics are built on refraction. It’s the same effect that makes a spoon in a glass of water look broken: light travels at different speeds in different media, so it bends when it crosses the boundary between them at an angle.

In a vacuum, light travels at almost 300,000 km/s. In air, it slows down only slightly. In glass, it slows noticeably: to about 200,000 km/s or less, roughly a third slower. The ratio of the speed in a vacuum to the speed in a material is that material’s refractive index, n = c/v, which is about 1.5 for ordinary glass. It’s precisely this difference in speed that deflects rays as they pass from air into a lens and back out again.
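To make the numbers concrete, here is a small sketch that turns a refractive index into a speed (n = c/v) and applies Snell’s law, n1·sin θ1 = n2·sin θ2, to find how far a ray bends when it enters glass. The index of 1.5 is an assumed typical value for ordinary glass, not a figure for any specific iPhone element.

```python
import math

C_VACUUM_KM_S = 299_792.458  # speed of light in a vacuum, km/s

def speed_in_medium(n: float) -> float:
    """Speed of light (km/s) in a medium with refractive index n, from n = c / v."""
    return C_VACUUM_KM_S / n

def refraction_angle_deg(theta_in_deg: float, n1: float, n2: float) -> float:
    """Angle of the refracted ray, in degrees from the surface normal (Snell's law).

    Raises ValueError when no refracted ray exists (total internal reflection).
    """
    s = n1 * math.sin(math.radians(theta_in_deg)) / n2
    if abs(s) > 1.0:
        raise ValueError("total internal reflection: no refracted ray")
    return math.degrees(math.asin(s))

N_AIR = 1.0003  # approximate index of air
N_GLASS = 1.5   # assumed typical index of ordinary glass

print(f"speed in glass: {speed_in_medium(N_GLASS):,.0f} km/s")
print(f"30 deg in air -> {refraction_angle_deg(30, N_AIR, N_GLASS):.1f} deg in glass")
```

A ray hitting the glass at 30° from the normal continues at only about 19.5°, bent toward the normal because it slows down; a ray arriving straight on (0°) is not deflected at all.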

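Large cameras use glass lenses, while most smartphone lens elements are molded from plastic. Plastic makes it far easier to produce complex shapes, in particular aspherical lenses, whose curvature changes from the center to the edge instead of following a sphere (hence the characteristic “bump” in the center). A plain spherical surface bends rays passing near its edge more strongly than rays near its center, so they don’t converge at a single point; this is called spherical aberration. An aspherical profile corrects for it, refracting rays evenly across the whole lens surface and reducing distortion toward the edges of the frame.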
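The problem that aspherical elements solve can be seen by tracing rays exactly through a single spherical surface. This sketch assumes a hypothetical 2 mm surface radius and an index of 1.53 (typical of optical plastics); it does not model a real iPhone element or an asphere, it only shows how rays entering farther from the axis cross it closer to the lens than the simple paraxial formula n·R/(n − 1) predicts.

```python
import math

def axis_crossing(h: float, radius: float, n: float) -> float:
    """Exact ray trace through one convex spherical surface (air -> index n).

    A ray travels parallel to the optical axis at height h (0 < h < radius).
    Returns the distance from the surface vertex to where the refracted ray
    crosses the axis.
    """
    i = math.asin(h / radius)       # angle of incidence, measured from the surface normal
    r = math.asin(math.sin(i) / n)  # angle of refraction, from Snell's law
    # Law of sines in the triangle (centre of curvature, hit point, axis crossing)
    return radius + radius * math.sin(r) / math.sin(i - r)

def paraxial_focus(radius: float, n: float) -> float:
    """Focus predicted for rays very close to the axis: n * R / (n - 1)."""
    return n * radius / (n - 1)

R_MM, N = 2.0, 1.53  # hypothetical surface radius (mm); index typical of optical plastics
print(f"paraxial focus: {paraxial_focus(R_MM, N):.3f} mm")
for h in (0.1, 0.5, 1.0, 1.5):
    print(f"ray at h = {h} mm crosses the axis at {axis_crossing(h, R_MM, N):.3f} mm")
```

The edge rays land noticeably short of where the central rays focus, which is exactly the blur an aspherical profile is shaped to remove.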

Six or seven plastic lenses inside one tiny module — and each one works to make your shot sharp.

How iPhone Switches Between Cameras

When you move the zoom slider from 0.5x to 5x in the camera app, it seems like one lens is smoothly zooming in. In reality, the system decides which physical module will deliver the best image right now. It analyzes the amount of light, the distance to the subject, the presence of motion — and switches between cameras so seamlessly that you don’t even notice.

Here’s how it works in practice. At 0.5x, the ultra-wide camera is working — it captures the widest possible scene.

At 1x, the main 48-megapixel module takes over. The clever intermediate 2x setting isn’t a separate camera but a virtual telephoto lens: the system takes the central quarter of the 48-megapixel frame (half its width and half its height) and outputs it as a full 12-megapixel shot with no upscaling. Apple describes this as optical-quality zoom at a 48 mm equivalent focal length, which suits portraits well.
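The 2x arithmetic is easy to check. This sketch assumes 8064 × 6048 as the full-resolution output of the 48 MP sensor and a 24 mm equivalent main lens (Apple lists the 2x setting at 48 mm); the function names are illustrative, not an Apple API.

```python
def center_crop(width: int, height: int, zoom: float) -> tuple[int, int]:
    """Pixel dimensions of a centred crop that narrows the field of view by `zoom`."""
    return int(width / zoom), int(height / zoom)

def equivalent_focal_length(base_mm: float, zoom: float) -> float:
    """Cropping by `zoom` multiplies the 35 mm-equivalent focal length by `zoom`."""
    return base_mm * zoom

# Assumed values: 48 MP full-resolution frame size and 24 mm-equivalent main lens
W, H, MAIN_MM = 8064, 6048, 24.0

for zoom in (2.0, 5.0):
    cw, ch = center_crop(W, H, zoom)
    print(f"{zoom:.0f}x crop: {cw} x {ch} = {cw * ch / 1e6:.1f} MP, "
          f"{equivalent_focal_length(MAIN_MM, zoom):.0f} mm equivalent")
```

Halving both dimensions keeps a quarter of the pixels, so 48 MP becomes about 12 MP without any interpolation. The same crop at 5x leaves only about 2 MP, which is why a dedicated telephoto module earns its place.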

At 5x, the periscope telephoto activates. But there’s a catch: when light is scarce, the camera may skip the telephoto and instead crop the image from the main module. The telephoto’s smaller sensor and narrower f/2.8 aperture collect less light, so in the dark the main camera does a better job.
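One part of that trade-off can be quantified from the f-numbers alone: the light reaching each unit of sensor area scales as 1/N² for f-number N. Apple lists the iPhone 16 Pro main camera at f/1.78 and the 5x telephoto at f/2.8. This sketch compares only the lenses; it ignores sensor size, which widens the gap further.

```python
import math

def relative_light(f_number_a: float, f_number_b: float) -> float:
    """How many times more light per unit of sensor area lens A delivers than lens B.

    Image-plane illuminance scales as 1 / N^2 for f-number N.
    """
    return (f_number_b / f_number_a) ** 2

MAIN_F, TELE_F = 1.78, 2.8  # iPhone 16 Pro main and 5x telephoto f-numbers
ratio = relative_light(MAIN_F, TELE_F)
print(f"main lens: {ratio:.2f}x more light per unit area ({math.log2(ratio):.2f} stops)")
```

The main lens delivers roughly 2.5 times as much light per unit area, about 1.3 photographic stops, which is a large margin in a dark room.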

Another non-obvious feature is automatic switching for macro photography. Bring your iPhone right up to a flower, and the system seamlessly switches to the ultra-wide module, which can focus much closer than the main camera. A world that a regular lens can’t capture opens up before you.
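Apple doesn’t publish its camera-selection logic, but the rules described above can be sketched as a simple decision function. Everything here is hypothetical: the `Scene` fields, the 10 lux low-light threshold, and the 15 cm minimum focus distance are illustrative assumptions, and the real system also weighs factors such as motion.

```python
from dataclasses import dataclass

@dataclass
class Scene:
    zoom: float        # zoom factor chosen in the camera app (0.5, 1, 2, 5, ...)
    lux: float         # estimated scene brightness
    subject_cm: float  # estimated distance to the subject

# Hypothetical thresholds, chosen only for illustration
LOW_LIGHT_LUX = 10.0
MAIN_MIN_FOCUS_CM = 15.0

def pick_module(scene: Scene) -> str:
    """Return the physical camera a simplified selector would use."""
    if scene.zoom < 1.0:
        return "ultra-wide"
    if scene.subject_cm < MAIN_MIN_FOCUS_CM:
        # Too close for the main lens to focus: hand over to the ultra-wide (macro)
        return "ultra-wide (macro)"
    if scene.zoom >= 5.0 and scene.lux >= LOW_LIGHT_LUX:
        return "telephoto"
    if scene.zoom == 1.0:
        return "main"
    # 2x, or 5x in low light: crop the centre of the main sensor
    return "main (crop)"

for s in (Scene(1.0, 500, 5), Scene(2.0, 500, 300), Scene(5.0, 2, 300)):
    print(s, "->", pick_module(s))
```

Ordering the checks this way keeps each rule from the text in one place: the zoom level chooses a default module, and light level and subject distance can override it.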

Lens Flares on iPhone Photos — Where Do They Come From

Every time light crosses a lens surface, whether entering or leaving an element, some of it is reflected instead of refracted. For camera manufacturers, this is a problem: the more light is reflected, the less reaches the sensor. Light bouncing back and forth between surfaces also creates flares and “ghost” images, such as the green dots that appear in photos of bright light sources.
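How quickly those losses add up follows from the Fresnel equations. At normal incidence, a boundary between indices n1 and n2 reflects ((n1 − n2)/(n1 + n2))² of the light. This sketch assumes an index of 1.5 and seven uncoated elements (two surfaces each), and it ignores absorption.

```python
def normal_reflectance(n1: float, n2: float) -> float:
    """Fraction of light reflected at normal incidence at a boundary (Fresnel equations)."""
    return ((n1 - n2) / (n1 + n2)) ** 2

def stack_transmission(n_lens: float, elements: int) -> float:
    """Fraction of light passing straight through `elements` uncoated elements,
    each with two air/lens surfaces. Ignores absorption and any light that is
    reflected twice and ends up heading toward the sensor anyway."""
    r = normal_reflectance(1.0, n_lens)
    return (1 - r) ** (2 * elements)

print(f"one uncoated surface reflects {normal_reflectance(1.0, 1.5):.1%}")
print(f"seven uncoated elements transmit {stack_transmission(1.5, 7):.0%}")
```

About 4% per surface sounds small, but across fourteen surfaces only around 56% of the light gets through. The doubly reflected light this sketch ignores is precisely what reaches the sensor as flare and ghosts.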

To combat this, special nano-coatings are applied to each lens — microscopically thin layers that reduce reflection. They also improve contrast and color accuracy. But completely eliminating flares is impossible — it’s physics, not a defect. Even professional cinema lenses costing tens of thousands of dollars suffer from flares.
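The simplest textbook anti-reflection coating is a single layer a quarter of a wavelength thick (inside the coating), so that light reflected from its top and bottom surfaces cancels out. Real lens coatings are multilayer stacks, and Apple does not publish its recipe; this sketch assumes a lens index of 1.5, green light at 550 nm, and magnesium fluoride (n ≈ 1.38), a common coating material.

```python
import math

def quarter_wave_thickness_nm(wavelength_nm: float, n_coating: float) -> float:
    """Layer thickness at which the two reflections are half a wavelength out of step."""
    return wavelength_nm / (4 * n_coating)

def coated_reflectance(n_lens: float, n_coating: float, n_air: float = 1.0) -> float:
    """Normal-incidence reflectance of one quarter-wave layer at its design wavelength."""
    return ((n_air * n_lens - n_coating ** 2) / (n_air * n_lens + n_coating ** 2)) ** 2

N_LENS, N_MGF2 = 1.5, 1.38  # assumed lens index; magnesium fluoride coating
print(f"MgF2 layer for 550 nm: {quarter_wave_thickness_nm(550, N_MGF2):.1f} nm thick")
print(f"reflectance with MgF2: {coated_reflectance(N_LENS, N_MGF2):.2%}")
print(f"ideal coating index: {math.sqrt(N_LENS):.3f}")
```

A layer only about 100 nm thick cuts reflection from about 4% to about 1.4%. It would reach zero if the coating’s index were exactly the square root of the lens’s, which is why manufacturers stack several layers to get closer to that ideal across the whole visible spectrum.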

The purple fringe around silhouettes is that very chromatic aberration. Modern iPhones handle it quite well, but completely defeating physics is still impossible. Image: petapixel.com

Another problem is chromatic aberration. White light consists of waves of different lengths (colors), and because a lens material refracts each wavelength by a slightly different amount, they split apart like a rainbow. In a lens, this shows up as colored fringes around high-contrast edges: purple or yellow halos. To combat it, manufacturers use special low-dispersion materials, which split the spectrum far less when they refract light.
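Dispersion can be modeled with the two-term Cauchy approximation, n(λ) = A + B/λ², and its effect on focus with the thin-lens lensmaker’s equation, 1/f = (n − 1)(1/R1 − 1/R2). The coefficients below roughly approximate a common crown glass (BK7), and the 10 mm lens is hypothetical; a lower-dispersion material corresponds to a smaller B.

```python
def cauchy_index(wavelength_um: float, a: float = 1.5046, b: float = 0.00420) -> float:
    """Refractive index from the Cauchy approximation n = A + B / lambda^2 (lambda in um).

    Default A and B roughly approximate BK7 crown glass.
    """
    return a + b / wavelength_um ** 2

def thin_lens_focal_mm(n: float, r1_mm: float, r2_mm: float) -> float:
    """Lensmaker's equation for a thin lens in air: 1/f = (n - 1)(1/R1 - 1/R2)."""
    return 1.0 / ((n - 1.0) * (1.0 / r1_mm - 1.0 / r2_mm))

# Hypothetical symmetric biconvex lens: R1 = +10 mm, R2 = -10 mm
for name, wl in (("blue", 0.486), ("green", 0.546), ("red", 0.656)):
    n = cauchy_index(wl)
    print(f"{name:5s} {wl} um: n = {n:.4f}, f = {thin_lens_focal_mm(n, 10, -10):.3f} mm")
```

Blue light sees a higher index and focuses about 0.15 mm closer than red, so no single sensor position can be sharp for every color at once. That mismatch is what appears as colored fringes.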

Finally, there’s distortion: the warping of straight lines. The ultra-wide camera is especially prone to barrel distortion, in which magnification drops toward the edges of the frame, so straight lines that don’t pass through the center bow outward like the sides of a barrel. The iPhone corrects this in software right at the moment of capture, and you don’t even notice the correction.
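Apple’s correction pipeline isn’t public, but a widely used way to describe this warping is a polynomial radial model, in which a point at normalized radius r moves to r(1 + k1·r² + k2·r⁴) (the radial part of the Brown-Conrady model). This sketch assumes a hypothetical k1 = −0.1, where a negative k1 gives barrel distortion, and undoes it by fixed-point iteration.

```python
def distort(x: float, y: float, k1: float, k2: float = 0.0) -> tuple[float, float]:
    """Radial distortion: scale a point by 1 + k1*r^2 + k2*r^4 (r = distance from centre).

    Negative k1 pulls points near the edges toward the centre: barrel distortion.
    """
    r2 = x * x + y * y
    s = 1 + k1 * r2 + k2 * r2 * r2
    return x * s, y * s

def undistort(xd: float, yd: float, k1: float, k2: float = 0.0,
              iters: int = 20) -> tuple[float, float]:
    """Invert distort() by fixed-point iteration: find (x, y) with distort(x, y) == (xd, yd)."""
    x, y = xd, yd
    for _ in range(iters):
        r2 = x * x + y * y
        s = 1 + k1 * r2 + k2 * r2 * r2
        x, y = xd / s, yd / s
    return x, y

K1 = -0.1  # hypothetical barrel-distortion coefficient
# A straight vertical line at x = 0.8 comes out curved: its middle sits farthest from the centre
for y in (-0.6, -0.3, 0.0, 0.3, 0.6):
    xd, yd = distort(0.8, y, K1)
    print(f"({0.8}, {y:+.1f}) -> ({xd:.3f}, {yd:+.3f})")
```

The distorted line’s ends are pulled in more than its middle, producing the outward bulge. Applying `undistort` to each pixel position restores the straight line, which is the essence of software distortion correction.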

What Is LiDAR and How Does It Work

Optics is only half the story. The other half is software processing and additional sensors. In the iPhone, alongside the three cameras, there’s a LiDAR scanner — that very mysterious black dot next to the lenses.

LiDAR works on the “time of flight” principle: it fires hundreds of infrared laser pulses, which bounce off objects and return. From the return times, the system builds a depth map of 256×192 points that updates up to 60 times per second. For you, this means much faster autofocus in the dark and more precise background blur in portrait mode.
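The time-of-flight idea reduces to one formula: the pulse travels to the object and back, so distance = c·t/2. This sketch uses idealized arithmetic with the 256×192, 60-per-second figures above; it does not describe how Apple’s sensor actually times its pulses.

```python
C_M_PER_S = 299_792_458.0  # speed of light, m/s

def distance_m(seconds: float) -> float:
    """Distance to the object from the pulse's round-trip time (there and back, so halve it)."""
    return C_M_PER_S * seconds / 2

def round_trip_s(meters: float) -> float:
    """Round-trip time of a pulse reflected by an object `meters` away."""
    return 2 * meters / C_M_PER_S

# Depth map of 256 x 192 points refreshed 60 times per second
points_per_second = 256 * 192 * 60

print(f"object 5 m away: echo returns after {round_trip_s(5.0) * 1e9:.1f} ns")
print(f"1 cm of depth = {round_trip_s(0.01) * 1e12:.0f} ps of extra travel time")
print(f"depth samples per second: {points_per_second:,}")
```

An echo from 5 m returns in about 33 nanoseconds, and telling two depths 1 cm apart means resolving a difference of roughly 67 picoseconds, for nearly three million depth samples every second.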