The new Nano Banana 2 neural network is worth trying for everyone

Over the past couple of years, image generation with neural networks has become as commonplace as the calculator on your phone. Yet speed and quality still force a trade-off: results are either fast but mediocre or slow but beautiful. Google decided it was time to stop choosing and released Nano Banana 2, a model that generates studio-quality images at up to 4K resolution almost instantly.

What Is Nano Banana 2

Let’s start with the question that puzzles many people: what does a banana have to do with it? Google has long used quirky internal code names for its projects, and Nano Banana is no exception. It is a family of compact image generation models built on the Gemini Flash architecture.

The first version of Nano Banana could already create images of decent quality, but it had limitations: relatively low resolution and noticeable delay when processing complex prompts. Nano Banana 2 is a full-fledged second generation, in which Google engineers reworked the model’s architecture from scratch.

The headline feature is support for resolutions up to 4K, which until now typically required heavier models or a separate upscaling step. At the same time, Nano Banana 2 is significantly faster than its competitors: generating a single 4K image with Midjourney takes about 30–60 seconds, while Google’s new model finishes in just a few seconds.

The Nano Banana 2 neural network processes photos in just a few seconds. Image source: blog.google

How the Nano Banana 2 Neural Network Works

The secret lies in the Gemini Flash architecture — a lightweight version of the large Gemini model that Google optimized specifically for tasks requiring minimal latency. While typical generative models work on the principle of “think first, then draw,” Nano Banana 2 uses so-called streaming generation. Simply put, the model begins constructing the image before it has fully processed the text prompt.
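
Google has not published how this streaming works internally, so the following is only a toy Python sketch of the general idea, not Nano Banana 2’s method. The hypothetical functions `batch_generate` and `streaming_generate` stand in for a model: the first waits for the entire prompt before producing anything, while the second yields a refined “draft” after every prompt token it receives.

```python
from typing import Iterable, Iterator, List


def batch_generate(prompt_tokens: Iterable[str]) -> List[str]:
    """'Think first, then draw': consume the whole prompt, then emit a single result."""
    tokens = list(prompt_tokens)  # blocks until every token has arrived
    return [f"image from {len(tokens)} token(s): {' '.join(tokens)}"]


def streaming_generate(prompt_tokens: Iterable[str]) -> Iterator[str]:
    """Streaming (toy): emit a draft after each token, refining it as more text arrives."""
    seen: List[str] = []
    for token in prompt_tokens:
        seen.append(token)
        # A real model would update pixels or latents here; this toy only records the text used so far.
        yield f"draft from {len(seen)} token(s): {' '.join(seen)}"


if __name__ == "__main__":
    prompt = ["a", "ripe", "banana", "on", "a", "marble", "table"]
    first_draft = next(streaming_generate(iter(prompt)))
    print(first_draft)  # available after reading only the first token
    print(batch_generate(iter(prompt))[0])  # available only after reading all seven
```

The point is the shape of the loop: output starts flowing after the first piece of input rather than after the last one.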

The engineers also applied knowledge distillation, a technique in which a large, “heavy” model teaches a smaller one to reproduce its results. Nano Banana 2 effectively “learned” its generation quality from the senior Gemini model while keeping a compact size and high processing speed. As a result, it can run not only on powerful servers but also on relatively modest hardware.
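
Google has not disclosed how Nano Banana 2 was distilled. As a hedged illustration, here is the classic classification-style distillation loss from Hinton et al. (2015) in plain Python: both models’ raw scores are softened with a temperature, and the student is penalized by the KL divergence from the teacher’s distribution. Image models distill richer outputs than a short list of class scores, so treat `distillation_loss` as a hypothetical, simplified stand-in.

```python
import math
from typing import List


def softmax(logits: List[float], temperature: float = 1.0) -> List[float]:
    """Turn raw scores into probabilities; a higher temperature gives a softer distribution."""
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def distillation_loss(teacher_logits: List[float], student_logits: List[float],
                      temperature: float = 2.0) -> float:
    """KL(teacher || student) on temperature-softened outputs, scaled by T^2."""
    p = softmax(teacher_logits, temperature)  # teacher's soft targets
    q = softmax(student_logits, temperature)  # student's softened predictions
    kl = sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)
    return kl * temperature ** 2


if __name__ == "__main__":
    teacher = [4.0, 1.0, 0.5]
    print(distillation_loss(teacher, [3.5, 1.0, 0.5]))  # small: the student nearly agrees
    print(distillation_loss(teacher, [0.5, 1.0, 4.0]))  # large: the student disagrees
```

The temperature exposes the teacher’s “near misses” (how strongly it prefers the second-best answer over the third), information a student cannot get from hard labels alone; the T² factor, as in the original paper, keeps gradient magnitudes comparable across temperatures.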

The key breakthrough, it turns out, was optimizing how the model works with latent space, the mathematical “map” in which a neural network stores representations of visual objects. Nano Banana 2 navigates this space far more efficiently than its predecessors, which gives it the speed boost without sacrificing detail.
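
Google has not described Nano Banana 2’s latent space, so the numbers below borrow the autoencoder configuration used by Stable Diffusion 1.x (8× downsampling per side, 4 latent channels) purely as an assumed example. The arithmetic shows why working in a compact latent space instead of raw pixels saves so much computation at 4K (3840 × 2160).

```python
def pixel_values(width: int, height: int, channels: int = 3) -> int:
    """Number of values in an RGB image."""
    return width * height * channels


def latent_values(width: int, height: int, downsample: int = 8, latent_channels: int = 4) -> int:
    """Number of values in a latent grid; defaults follow Stable Diffusion 1.x (an assumption here)."""
    return (width // downsample) * (height // downsample) * latent_channels


if __name__ == "__main__":
    width, height = 3840, 2160  # 4K UHD
    pixels = pixel_values(width, height)
    latents = latent_values(width, height)
    print(f"pixels: {pixels:,}")                         # pixels: 24,883,200
    print(f"latents: {latents:,}")                       # latents: 518,400
    print(f"ratio: {pixels // latents}x fewer values")   # ratio: 48x fewer values
```

Under these assumptions the generator manipulates 48 times fewer values than the final image contains; a decoder then maps the small latent grid back to full-size pixels.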

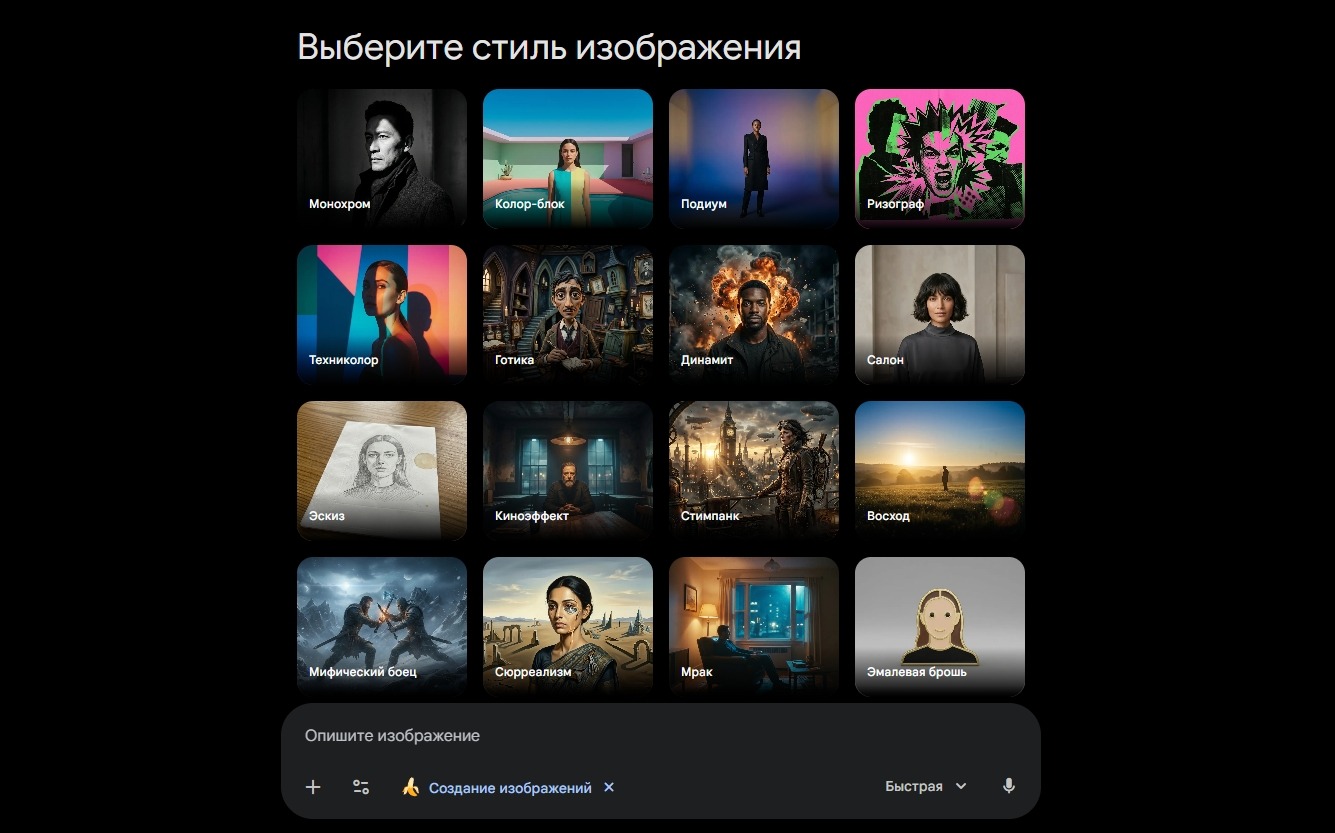

Nano Banana 2 lets you choose an image style right away

How Nano Banana 2 Is Better Than Alternatives

The generative model market is crowded right now: Midjourney, DALL-E 3, Stable Diffusion, Ideogram, and a dozen other competitors. So why does Google need yet another model?

Most popular image generators are standalone products that live in their own ecosystems: Midjourney works through Discord, and DALL-E is built into ChatGPT. Nano Banana 2, by contrast, is integrated directly into Google’s ecosystem, from Search to Workspace and Android. Image generation could therefore become as basic a function as spell-checking.

In terms of image quality, Nano Banana 2 is already comparable to the market leaders. The model is particularly good at photorealistic portraits, product photography, and architectural visualization. In painterly or anime styles, however, it still trails Midjourney, though Google promises to close this gap in upcoming updates.

Still, the main advantage lies not in the quality of individual images but in speed and scalability. For businesses that need to generate hundreds of images per hour (for example, for product cards in an online store), the difference between 5 seconds and a minute per image is not a minor detail; it is a question of economics.
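
A quick back-of-the-envelope calculation makes the economics concrete. The sketch below assumes, hypothetically, a shop that needs 500 images per hour and workers that each render one image at a time; `images_per_hour` and `workers_needed` are illustrative helpers, not part of any product.

```python
import math


def images_per_hour(seconds_per_image: float) -> float:
    """Throughput of a single worker that renders one image at a time."""
    return 3600 / seconds_per_image


def workers_needed(target_per_hour: int, seconds_per_image: float) -> int:
    """Parallel workers required to hit the hourly target, rounded up."""
    return math.ceil(target_per_hour * seconds_per_image / 3600)


if __name__ == "__main__":
    for seconds in (5, 60):
        print(f"{seconds:>2} s/image: {images_per_hour(seconds):.0f} images/hour per worker, "
              f"{workers_needed(500, seconds)} worker(s) for 500 images/hour")
```

At 5 seconds per image a single worker covers the whole load (720 images per hour), while at a minute per image you need nine workers running in parallel, and total rendering time grows twelvefold (30,000 s versus 2,500 s).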

Nano Banana 2 created this image in five seconds

What Is the Nano Banana 2 Neural Network For

Google positions Nano Banana 2 primarily as a tool for developers and businesses. Access to the model will be provided through the Vertex AI API, meaning any service can integrate studio-quality image generation into its product. For regular users, the model is already available in the Gemini chatbot and Google AI Studio.

Interestingly, Google is also betting on content safety. Nano Banana 2 has a built-in SynthID watermarking system that invisibly marks every generated image. This makes it possible to programmatically distinguish a real photo from an AI-generated one, even when the difference is invisible to the naked eye.
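
SynthID’s internals are not fully public; Google describes it as a learned watermark designed to survive common edits such as filters and compression. The toy below is NOT SynthID. It uses the much simpler least-significant-bit technique to illustrate the general idea: shift pixel values by at most 1 (invisible to the eye), then check for the hidden pattern in code. The helpers `embed_bits`, `extract_bits`, and `has_watermark` are hypothetical names for this sketch.

```python
from typing import List


def embed_bits(pixels: List[int], bits: List[int]) -> List[int]:
    """Hide each bit (0 or 1) in the least significant bit of one 0-255 pixel value."""
    if len(bits) > len(pixels):
        raise ValueError("not enough pixels to hold the watermark")
    marked = list(pixels)
    for i, bit in enumerate(bits):
        marked[i] = (marked[i] & ~1) | bit  # clear the lowest bit, then set it to the watermark bit
    return marked


def extract_bits(pixels: List[int], count: int) -> List[int]:
    """Read back the lowest bit of the first `count` pixel values."""
    return [p & 1 for p in pixels[:count]]


def has_watermark(pixels: List[int], signature: List[int]) -> bool:
    """Programmatic check: does the image carry the expected bit pattern?"""
    return extract_bits(pixels, len(signature)) == signature


if __name__ == "__main__":
    original = [200, 201, 57, 58, 120, 33, 90, 91]  # grayscale pixel values
    signature = [1, 0, 1, 1, 0, 0, 1, 0]
    marked = embed_bits(original, signature)
    print(marked)                                             # [201, 200, 57, 59, 120, 32, 91, 90]
    print(max(abs(a - b) for a, b in zip(original, marked)))  # 1
    print(has_watermark(original, signature), has_watermark(marked, signature))  # False True
```

Unlike SynthID, this scheme is fragile: re-saving the image as a JPEG or resizing it would scramble the lowest bits and erase the mark.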

The generative AI market is changing so fast that “impossible” turns into “already working” within a couple of months. Nano Banana 2 is more proof of that: studio quality, real-time speed, and integration into the world’s largest digital ecosystem. All that’s left is to see how competitors respond.